To understand what HaptX (formerly AxonVR) is offering users, let’s first look at the gloves’ design and functionality, and then at what this means for engineering, particularly automotive.

Design and Functionality

While haptic feedback has been set to complement VR since it was first introduced in the ’70s, HaptX Inc. goes a step further than traditional tactile feedback, which is limited to motor-induced vibrations (think VR haptic suit hardware, for instance).

As the next two subsections cover respectively, the Seattle-based engineers have collectively used 1.5 mm-thick microfluidic ‘skin’, pneumatic actuators, and an exoskeletal hand to “let you feel the shape, movement, texture, and weight of virtual objects”, to quote HaptX’s website.

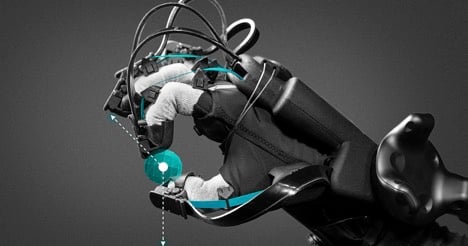

A VR spider crawls over the user’s right-hand HaptX Glove, whose use of actuators and exoskeletal technology allows the wearer to ‘feel’ the intricacies of such an experience. Image courtesy of HaptX.

HaptX’s Microfluidic Actuators

With immersive technology becoming ever more prominent as a means of informing engineers’ design and manufacturing processes (particularly in the automotive industry, as discussed later), users need to feel that they are realistically inside their workshop simulation. This is where HaptX’s patented microfluidics-based haptics come to the fore.

Rather than using the traditional motor-based feedback described above, the gloves use hydraulics and microscopic volumes of liquid (anywhere between a billionth and a quadrillionth of a litre).

Microfluidics is the study, and the associated technology, of manipulating liquid (in this case water) at the sub-millimetre scale.

Accordingly, the HaptX Gloves are smart textiles whose tiny channels carry microscopic volumes of water. Through the valve array in the HaptX control console, this flow hydraulically controls the glove’s 130 embedded tactile actuators (or ‘tactors’, to use HaptX’s portmanteau).

The tactors work by creating air bubbles that push against the user’s skin to represent texture, especially hardness or softness: the harder the virtual object (e.g. a vehicle), the more the air bubbles dent the wearer’s skin (between 0.5 mm and 2 mm of displacement, as per the device’s patent), and vice versa.
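
As a rough illustration of the hardness-to-displacement relationship described above, the following Python sketch maps a virtual object's hardness to a tactor displacement. Only the 0.5 mm to 2 mm range comes from the text. The normalised 0-to-1 hardness scale, the linear interpolation, and the function name `tactor_displacement_mm` are assumptions made for illustration, not HaptX's actual control law.

```python
MIN_DISPLACEMENT_MM = 0.5  # lower bound quoted in the article
MAX_DISPLACEMENT_MM = 2.0  # upper bound quoted in the article


def tactor_displacement_mm(hardness: float) -> float:
    """Map a normalised hardness (0 = softest, 1 = hardest) to a displacement.

    Hypothetical linear mapping, assumed purely for illustration.
    """
    # Clamp so out-of-range inputs still land inside the quoted range.
    h = min(max(hardness, 0.0), 1.0)
    return MIN_DISPLACEMENT_MM + h * (MAX_DISPLACEMENT_MM - MIN_DISPLACEMENT_MM)


if __name__ == "__main__":
    # Example objects and hardness values are invented for the demo.
    for label, hardness in [("sponge", 0.1), ("rubber tyre", 0.5), ("car door", 1.0)]:
        print(f"{label}: {tactor_displacement_mm(hardness):.2f} mm")
```

Under these assumptions a fully hard object produces the maximum 2 mm indentation and a fully soft one the minimum 0.5 mm; a real system could just as well use a non-linear curve.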

An example of one of the HaptX Gloves’ fingertip-covering ‘tactor’ panels (naturally, palm-of-the-hand actuators are required, too). Image courtesy of HaptX.

HaptX takes pride in the gloves’ low latency and overall versatility. As HaptX’s EE manager Jeffrey Damelio explains, accurately timing the electrical controls of the valve array is a comparatively forgiving task: after all, we respond faster to audiovisual (especially audio) stimuli than to touch.

HaptX’s CEO and founder Jake Rubin likens the panel to a display screen: the tactors are essentially like TV pixels, in that they are both media that responsively and dynamically change their output (whether it be soft or hard colours, or soft or hard textures) based on what their interface and users call for.

Image courtesy of HaptX.

HaptX’s Exoskeletal Hand

For all its ingenuity, the tactile feedback described above still doesn’t explain what happens when the user touches virtual objects that represent immovable, impenetrably heavy and hard fixtures (e.g. a building).

This is where force feedback is required: it offers resistance that keeps the user’s arm movements from essentially ‘piercing’ solid items in the VR simulation.

With this in mind, HaptX needed to complement the mild push of the microfluidic, hydraulic-based tactile feedback with a stronger pull (up to 4 lb of force) from the gloves’ embedded, ultra-lightweight exoskeletal hand.

But while electromechanically reining in the user’s hand motions is necessary, the challenge is in programming the HaptX Gloves to respond at the right time and position, in relation to the virtual space.

The mechanics of this are more clear-cut when the VR object represents a permanent fixture (such as the below-pictured barn): simply put, the harder the user pushes against the building, the more the exoskeleton holds back their hand.
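
The "push harder, get held back more" behaviour can be sketched with a spring-like (penalty-based) model, a common approach in haptic rendering generally. This is not a description of HaptX's own algorithm: the stiffness value, the use of penetration depth as the input, and the function name `resistive_force_n` are all assumptions for illustration. Only the 4 lb ceiling comes from the text.

```python
LBF_TO_NEWTONS = 4.4482216152605  # definition of one pound-force in newtons
MAX_FORCE_N = 4.0 * LBF_TO_NEWTONS  # "up to 4 lb" from the article, about 17.8 N


def resistive_force_n(penetration_mm: float, stiffness_n_per_mm: float = 2.0) -> float:
    """Return the pull-back force for a hand pressing into a fixed virtual object.

    penetration_mm: how far the tracked fingertip lies beyond the virtual surface.
    stiffness_n_per_mm: hypothetical spring constant chosen for illustration.
    """
    if penetration_mm <= 0.0:
        return 0.0  # not touching the surface, so no resistance
    # Resistance grows with penetration and is capped at the quoted maximum.
    return min(penetration_mm * stiffness_n_per_mm, MAX_FORCE_N)


if __name__ == "__main__":
    for depth in (0.0, 2.0, 5.0, 20.0):
        print(f"{depth:4.1f} mm into the wall -> {resistive_force_n(depth):.1f} N")
```

The cap matters here: however hard the user pushes, this sketch never requests more than the quoted maximum, which is why accurate tracking of where the hand meets the surface does much of the work.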

But even this requires intricate tracking hardware and software (discussed shortly) so that the electromechanics respond correctly when the user’s hand is ‘touching’ the fixture. More impressive still, the exoskeleton responds accurately when its wearer makes contact with objects that are not only moving but airborne, too.

Consider, for instance, that HaptX’s VR farmyard simulation culminates in a UFO invasion, as pictured below.

The user prepares to strike the VR UFOs while wearing HaptX Gloves. Image courtesy of HaptX.

The user is able to feel the force of striking the moving aerial objects and experience the exoskeleton’s subtle-yet-significant pull-back.

This is required to represent, and balance, the two opposing forces at play: the virtual flying saucers’ physical resistance (by way of being solid), and meanwhile, the ability of the user’s arms to still follow through in the air, as the UFOs are batted away.

Such accuracy is made possible by the HaptX Gloves’ magnetic motion tracking, which, to quote the company’s website, “leverages proprietary software and electronics to deliver sub-millimetre accuracy hand tracking with six degrees of freedom per finger”.

Such localisation technology is all the more distinct given that no coding is required to train HaptX’s software to know where your hand should exist in the virtual space: it generates your (disembodied) hand avatar both accurately and automatically.

Image courtesy of HaptX.

What This All Means for Engineering—Particularly Automotive

The design and functionality accomplishments described above are impressive, but the question remains: outside of the obvious breakthroughs in immersion that gaming and other entertainment will enjoy, what advantages will the accuracy of the HaptX Gloves bring to the more occupational side of immersive computing?

The answer comes in the form of the HaptX Gloves Development Kit, which lets industry professionals, not least EEs and applications engineers, benefit from occupation-specific VR simulations that developers program into the HaptX system. This is done either through the supported plugins for Unity and Unreal Engine 4, or through a low-level C++ application programming interface.

As Jake Rubin explains, this means that "leading automotive and aerospace companies can touch and interact with their vehicles before they are built, radically reducing time and cost for design iterations".

Such tactile intuitiveness is indeed an industry breakthrough. Anyone who has ever used a VR controller that lacks haptic functionality will agree that it is outright disorienting to try to control their virtual objects, not least in the complex field of automotive design and manufacture.

Nissan, the first automaker in Japan to adopt the HaptX Gloves, would certainly agree: it has already made two of its EVs, the Nissan Leaf and the Nissan IMs, available as touchable virtual designs.

HaptX maintains that the marriage of automotive production solutions and tactile immersive computing is the breakthrough that concept car designs need, and it’s easy to see the significance of this when you consider the traditional concept-to-prototype timespan. “It takes years,” explains HaptX’s press team, “between creating the first 3D model to sitting in the driver’s seat of a complete physical prototype. HaptX Gloves can reduce that time ... to days.”

Indeed, the ability for all manner of engineers to realistically experience a car’s controls, from opening the glove compartment to checking the steering, before a physical prototype even exists marks an unmistakable stride in manufacturing, and not just in automotive.
